Fairly biased

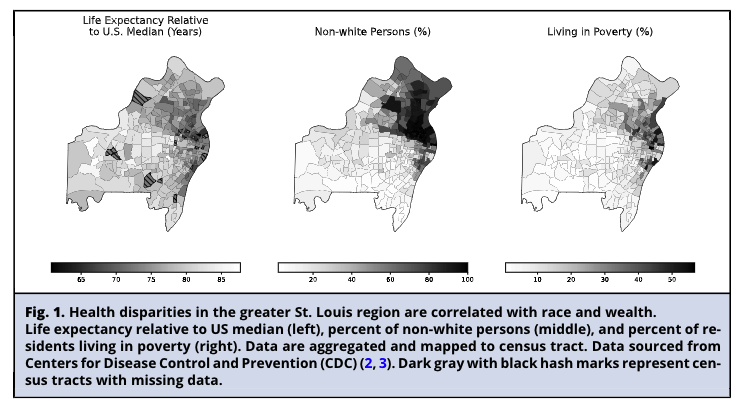

Health equity through a journalism and data science lens

2023-09-27

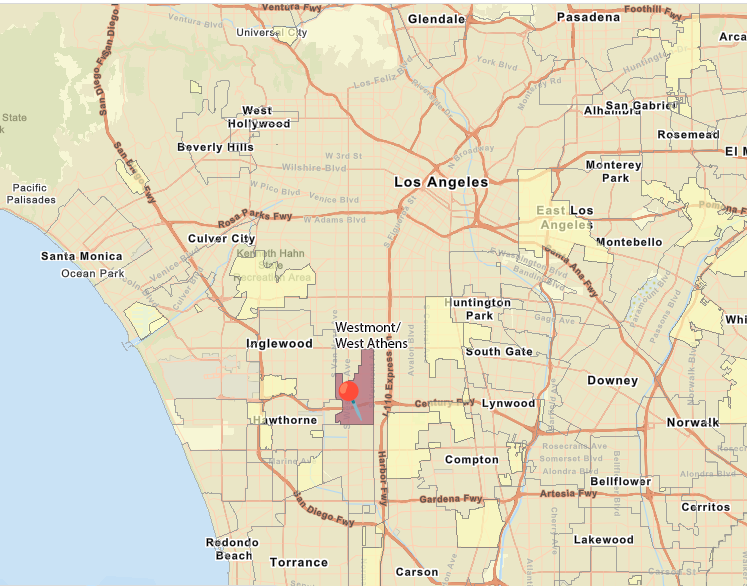

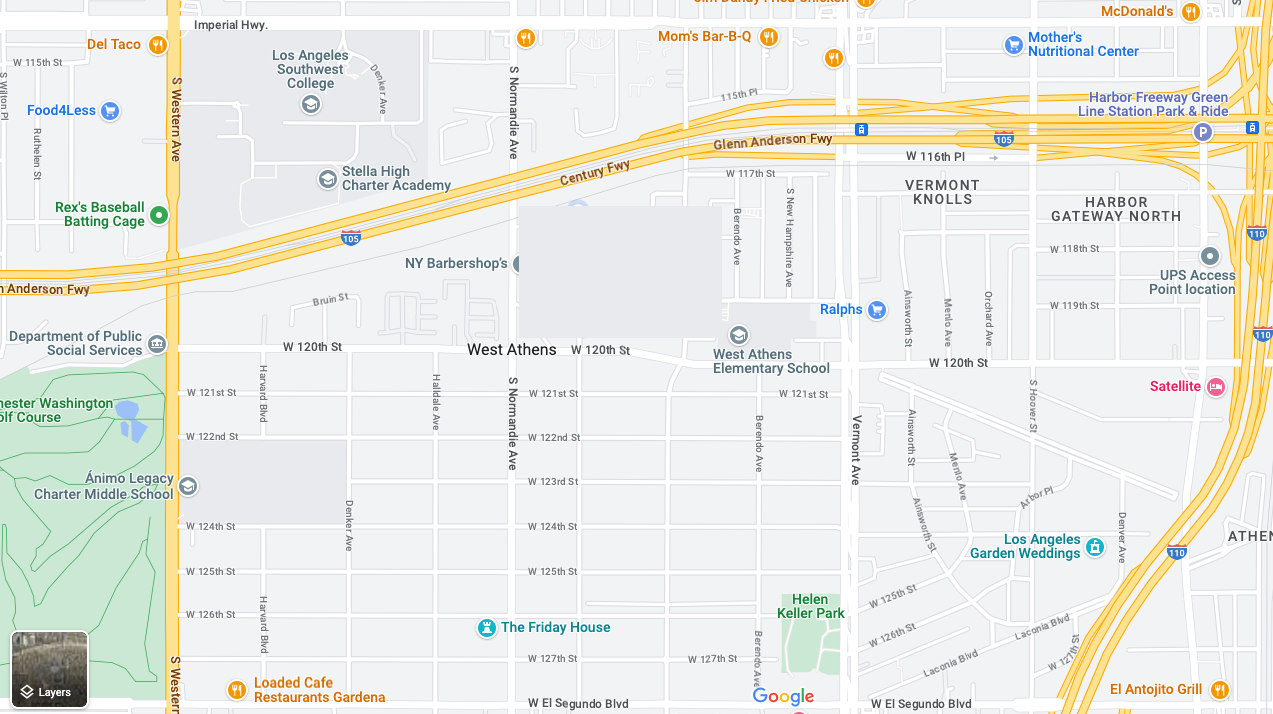

Welcome to West Athens

Fun fact: USC isn’t the southern border of Los Angeles

Grocery/pharmacy run

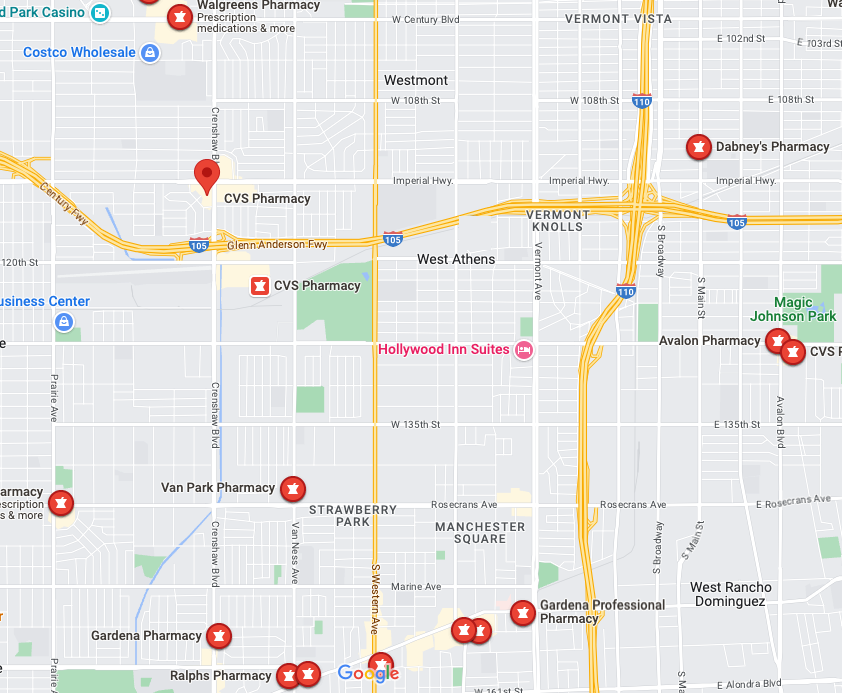

Where’s the pharmacy?

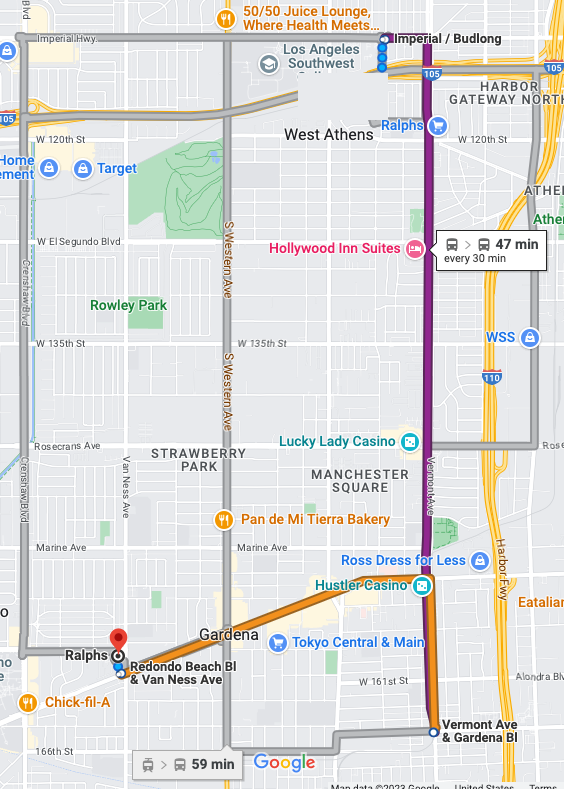

No car?

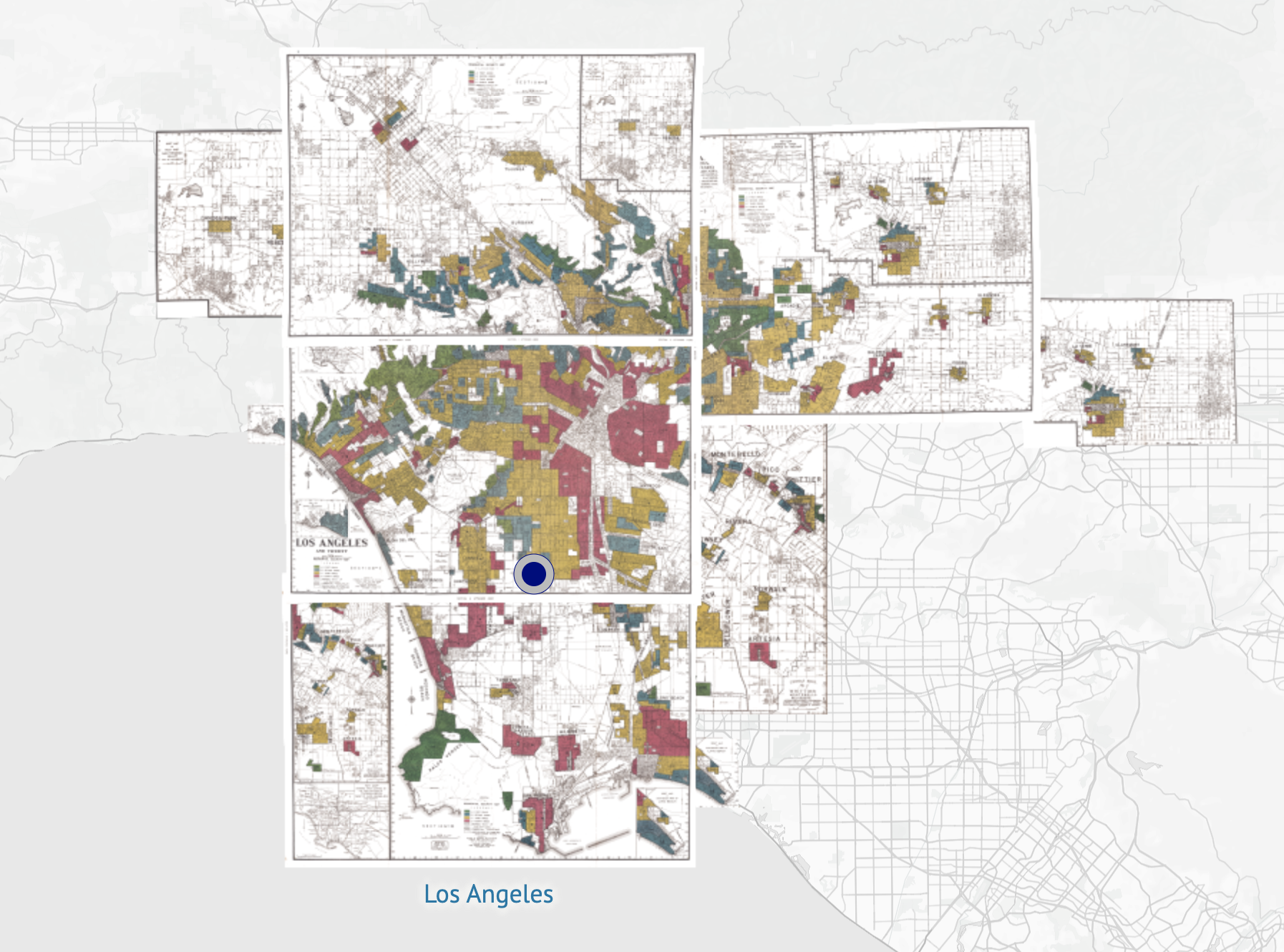

All routes point to our past

L.A. air quality vs. L.A. redlining

L.A. air quality vs. L.A. COVID vulnerability

How do you find your inequity topic?

Compare your ‘normal’ with the ‘normal’ of others

Switch up your route to work or the store

Follow up on something surprising you heard

Go with your natural curiosity

Passionate about a topic? Get exploring

Know a personal blindspot? Challenge yourself

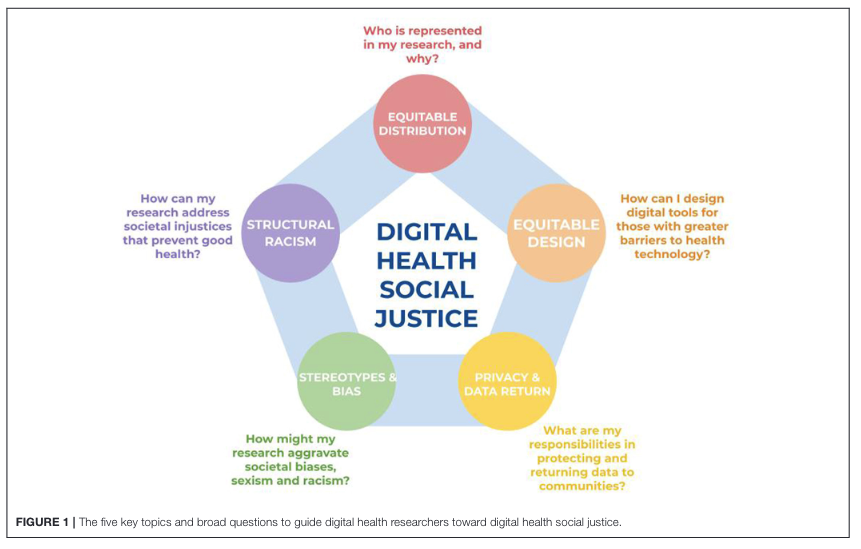

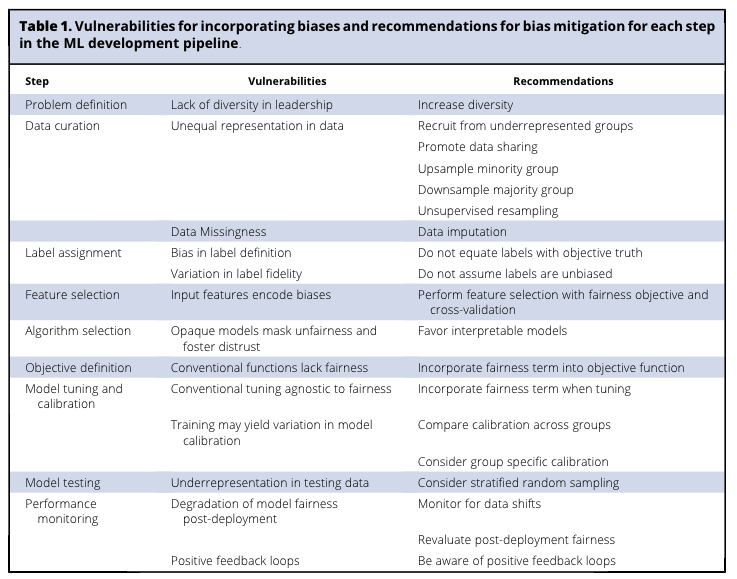

Let’s talk data science

There’s bias around every corner

Antiquated attitudes

Non-representative sampling

Exclusionary methodologies

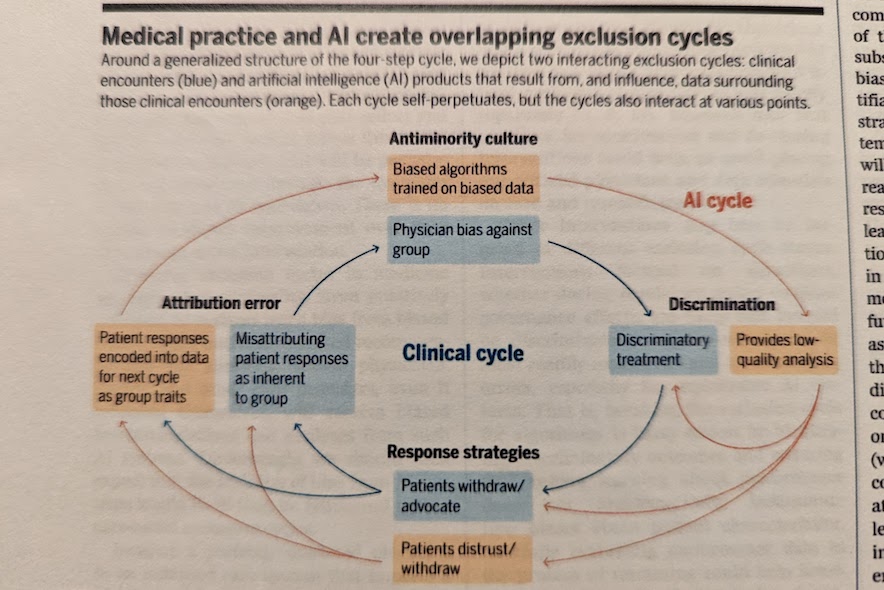

Uninterpretable AI models

“Even if AI systems are designed by unbiased coders striving for neutrality, those systems derive data from and exist within a medical system that has its own antiminority culture; those views are embedded in the patterns that AI learns.”

Race is more than a data column

Even when race is removed from a dataset, statistical models can reliably predict a patient’s race from the way the physician wrote about them. (Keeling et al.)

If bias is already creeping through, a statistical model will only exacerbate it.
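To make the point about removed columns concrete, here is a minimal sketch on purely synthetic data. It is not the method from Keeling et al.; the `neighborhood` field, the group labels "A" and "B", and the 90% correlation are all assumptions chosen for illustration. The idea is that dropping a sensitive column does little if another field still tracks it closely.

```python
import random
from collections import Counter, defaultdict


def make_records(n, seed=0):
    """Generate synthetic records (hypothetical data, not real patients)."""
    rng = random.Random(seed)
    records = []
    for _ in range(n):
        group = rng.choice(["A", "B"])
        # Assumption: the proxy field lines up with the group 90% of the time.
        matches = rng.random() < 0.9
        if (group == "A") == matches:
            neighborhood = "north"
        else:
            neighborhood = "south"
        records.append({"group": group, "neighborhood": neighborhood})
    return records


def fit_proxy_lookup(train):
    """Learn the most common group for each value of the proxy field."""
    counts = defaultdict(Counter)
    for record in train:
        counts[record["neighborhood"]][record["group"]] += 1
    return {value: c.most_common(1)[0][0] for value, c in counts.items()}


def accuracy(lookup, test):
    """Share of held-out records whose group is recovered from the proxy alone."""
    hits = sum(lookup.get(r["neighborhood"]) == r["group"] for r in test)
    return hits / len(test)


records = make_records(2000)
train, test = records[:1000], records[1000:]

# The "model" never sees the group column at prediction time, only the proxy.
lookup = fit_proxy_lookup(train)
leak_accuracy = accuracy(lookup, test)
print(f"Group recovered from proxy alone: {leak_accuracy:.0%} (chance is about 50%)")
```

If the recovered share sits well above chance, the sensitive attribute is still effectively in the data, and any model trained on it can pick the pattern up.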

Questions to explore